Musk hints Twitter's bird branding could be replaced

Twitter owner Elon Musk hinted late Saturday night that he may ditch the social media network's blue cartoon bird branding -- and soon -- for an edgier logo based on an "everything app" he...

2023-07-23 21:50

Elon Musk reacts angrily to criticism for giving in to governments’ Twitter censorship demands

Twitter boss Elon Musk, who has often touted himself as a champion of free speech, said he had no "actual choice" when accused of caving in to censorship demands made by authoritarian governments. Since the billionaire's takeover in October last year, Twitter has approved 83 per cent more censorship requests from governments such as Turkey and India, El Pais reported. The company reportedly received 971 requests from governments, fully acceding to 808 of them and partially acceding to 154. The year prior to Mr Musk taking control, Twitter agreed to 50 per cent of such requests, which was in line with the compliance rate indicated in the company’s last transparency report. The report, shared by Bloomberg columnist Matthew Yglesias, evoked an angry reaction from Mr Musk. Mr Yglesias tweeted the report with the caption "I’m a free speech absolutist", quoting the Twitter boss. The world's second-richest person shot back, writing: "You're such a numbskull. Please point out where we had an actual choice and we will reverse it." The columnist responded: "Look, I’m not the one who bought Twitter amidst a blaze of proclamations about free speech principles. Obviously you’re within your rights to run your business however you want." Mr Musk has repeatedly stated his backing for free speech both before and since the $44bn acquisition of Twitter. The “absolutist” quote refers to a tweet in March 2022 in the wake of Vladimir Putin’s unprovoked invasion of Ukraine. "Starlink has been told by some governments (not Ukraine) to block Russian news sources. We will not do so unless at gunpoint," Mr Musk tweeted. "Sorry to be a free speech absolutist." Yet Twitter has been accused of helping incumbent Turkish president Recep Tayyip Erdogan stifle criticism by blocking several accounts in the two days before the country’s hotly contested general election.
“In response to legal process and to ensure Twitter remains available to the people of Turkey, we have taken action to restrict access to some content in Turkey today,” Twitter’s global government affairs team announced, without explaining which tweets would be blocked. Following severe criticism, Mr Musk alleged Twitter has “pushed harder for free speech than any other internet company, including Wokipedia”. Earlier this year in India, Twitter complied after Narendra Modi’s government used emergency powers to ban content related to a BBC documentary on social media. The two-part documentary included a previously unpublished report from the UK Foreign Office that held Mr Modi “directly responsible” for the “climate of impunity” that enabled communal violence in Gujarat state. The riots in February 2002 killed over 1,000 people – most of them Muslims – while Mr Modi was chief minister of the state. Justifying the decision to comply, Mr Musk said: "The rules in India for what can appear on social media are quite strict, and we can’t go beyond the laws of a country." He said doing so would put his staff at risk. “If we have a choice of either our people going to prison or us complying with the laws, we will comply with the laws.”

2023-05-29 13:21

HSBC down: App and website offline amid Black Friday sales

HSBC’s app and mobile banking website are down on one of the biggest shopping days of the year. The outage came on the morning of Black Friday, when most large retailers offer significant sales – and customers are even more likely to be checking their balance. Instead, the app showed an error message indicating that service was unavailable. The bank said it was urgently working to fix the issue, which appeared to bring problems across its services. “We understand some customers are having trouble accessing banking services as usual right now,” HSBC said in a statement. “We’re investigating this as a matter of urgency and will share an update as soon as possible.” HSBC offers a status page, but it does not appear to have been updated. It showed that all services were operating normally – despite the company’s statement otherwise. Those who bank with First Direct may also be affected, since it is a part of HSBC.

2023-11-24 17:47

AI that alters voice and imagery in political ads will require disclosure on Google and YouTube

Political ads using artificial intelligence on Google and YouTube must soon be accompanied by a prominent disclosure if imagery or sounds have been synthetically altered.

2023-09-08 01:16

Siemens Energy Talks on Loan Guarantees Are Still Ongoing

Siemens Energy AG’s talks with the German government and main shareholder Siemens AG on billions in loan guarantees

2023-11-10 01:23

Scientists discover that people who live past 90 have key differences in their blood

Centenarians have become the fastest-growing demographic group in the world, with numbers approximately doubling every 10 years since the 1970s. Many researchers have sought out the factors and contributors that determine a long and healthy life. The discussion isn't new either, with Plato and Aristotle writing about the ageing process over 2,300 years ago. Understanding what is behind living a longer life involves unravelling the complex interplay of genetic predisposition and lifestyle factors and how they interact. In a recent study published in GeroScience, researchers have unveiled common biomarkers, including levels of cholesterol and glucose, in people who live past 90. The study is one of the largest that has been conducted in this area, comparing biomarker profiles measured throughout life among those who lived to be over the age of 100 and their shorter-lived peers. Data came from 44,000 Swedes who underwent health assessments at ages 64-99. These participants were then followed through Swedish register data for up to 35 years. Of these people, 2.7 percent (1,224) lived to be 100 years old. 85 percent of these centenarians were female. The study's findings concluded that lower levels of glucose, creatinine (which is linked to kidney function) and uric acid (a waste product in the body caused by the digestion of certain foods) were linked to those who made it to their 100th birthday. The findings suggest a potential link between metabolic health, nutrition, and exceptional longevity. In terms of lifestyle factors, the study didn't allow for any conclusions to be made, but the authors of the study added that it's reasonable to think that factors such as nutrition and alcohol intake play a role. Overall, the fact that differences in biomarkers could be observed a long time before death suggests that genes and lifestyle play a role, but of course, chance likely has an input too.

2023-10-17 00:21

WHOOP Unlocks Doors at New Global Headquarters in Boston at “One Kenmore Square”

BOSTON--(BUSINESS WIRE)--Jul 13, 2023--

2023-07-13 21:25

Thyssenkrupp Gets EU Approval for €2 Billion Green Steel Aid

Thyssenkrupp AG secured European Union approval for a €2 billion ($2.2 billion) package in state subsidies from the

2023-07-20 18:29

HYFIX.AI Launches New RTK Rovers With Quectel LC29H GNSS Module on CrowdSupply

PALO ALTO, Calif.--(BUSINESS WIRE)--Jun 14, 2023--

2023-06-15 00:23

BlackRock Tries for Spot-Bitcoin ETF With Fresh Filing

BlackRock Inc. is trying its hand at potentially getting the first spot-Bitcoin exchange-traded fund launched in the US.

2023-06-17 02:51

Play over 100,000 preloaded classic games on this $329 console

TL;DR: As of May 13, you can snag the Super Console X King Retro Game

2023-05-13 17:57

Meta's Zuckerberg shakes off Apple Vision Pro: report

Meta chief Mark Zuckerberg on Thursday told employees that while Apple's mixed reality gear may be nice, it is not his vision of the future...

2023-06-09 09:23

You Might Like...

Brandon Fugal Joins OmniTeq Board of Directors

Chip Designer Arm in Talks With Nvidia to Anchor IPO, FT Says

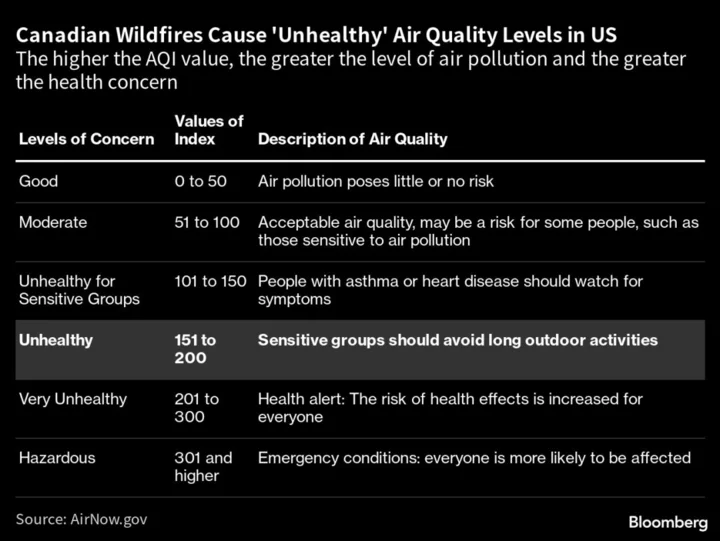

How Safe Is It to Go Outside and Other Wildfire Smoke Issues

EU Fails to Set Date Ending Fossil Fuel Subsidies Before COP28

Tired of Elon Musk? Here are the Twitter alternatives you should know about

Fortnite Purradise Meowscles Skin: Quests, How to Get

The Truth About Olive Garden’s “Unlimited“ Breadsticks Deal

Optimum Announces Elton Hart as Vice President, General Manager of Mid Atlantic Area